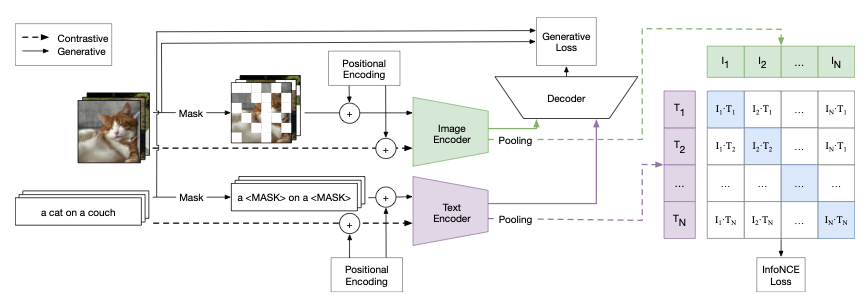

@inproceedings{weers2023mae_clip,
  title={Self Supervision Does Not Help Natural Language Supervision at Scale},
  author={Weers, F. and Shankar, V. and Katharopoulos, A. and Yang, Y. and Gunter, T.},
  booktitle={{Proceedings IEEE Conf. on Computer Vision and Pattern Recognition (CVPR)}},
  year={2023},
  url={https://arxiv.org/pdf/2301.07836}
}

Angelos Katharopoulos

Hi! I am currently in the Machine Learning Research group at Apple. I am interested in making deep neural networks train with less compute, less memory, and fewer samples.

I completed my PhD at Idiap Research Institute and EPFL under the supervision of François Fleuret.

Previously, I studied electrical engineering at the Aristotle University of Thessaloniki and worked with Christos Diou and Anastasios Delopoulos.